Micro Optimisations

I was recently involved in a project where I was reminded of a typical scenario in today's IT environments that still baffles me...

There are many different schools of thought when it comes to how and when to apply code optimisations. Some people would have you believe code should be optimised from the start. Others believe in getting it functional first, then optimising.

This blog is not about the bigger question of when and how to most effectively incorporate optimisations into a project. It is rather about the scenario where the code is functional and you are now looking at ways to improve its performance.

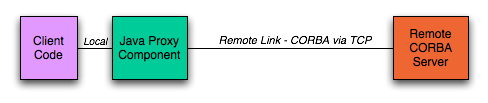

I wrote a Java component for this company. To avoid lawsuits etc. (suffice to say they make much more money than I do) I am being deliberately vague. This component communicates with a backend system using CORBA. The client component and the CORBA server are about 1500 km apart. There is obviously not a high speed link between these two entities (based on testing, I assume the link is not much faster than a 1-2 Mbps line).

So the setup is as follows:

The code I was responsible for is marked by the green block. A code review was done (which in my mind is always a Good Thing). The optimisation-related issues that came out of it follow:

- Use iterators instead of for loops. Iterators are safer and faster.

- Don't use synchronized, since it is better for a client to see a random error than for the whole system to be slower due to its performance impact.

- Never use static methods or data since their access is slower than instance variables/methods on certain multiprocessor systems.

- A method parameter named XXX of type String should not be used in the for loop as for (int i=0;i<XXX.length();i++); the value of XXX.length() should rather be assigned to a local variable first.

- etc.

All of these are valid, correct concerns, except for points 1, 2 and 3: iterators are, if anything, slower than plain for loops, synchronisation is absolutely essential for system correctness, and there is no reason static methods need be slower on multiprocessor systems. The real issue is the relevance of all this. (By the way, none of these issues are in a hot part of the code - most of them are in management code paths, not performance-critical paths.)
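To make the synchronisation point concrete, here is a minimal sketch, not the actual component. The class `SafeCounter` and its methods are hypothetical names of my own. Several threads increment a shared counter; because both methods are synchronized, the final value is always exactly the number of increments performed. Remove the keyword and updates can be lost, which is precisely the "random error" the review was willing to accept.

```java
// Hypothetical example class; the names are illustrative, not from the real component.
final class SafeCounter {
    private long value;

    // synchronized makes the read-modify-write of value++ atomic across threads.
    synchronized void increment() {
        value++;
    }

    synchronized long get() {
        return value;
    }

    // Starts the given number of threads, each incrementing the same counter.
    static long countWithThreads(int threads, int incrementsPerThread) {
        SafeCounter counter = new SafeCounter();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < incrementsPerThread; i++) {
                    counter.increment();
                }
            });
            workers[t].start();
        }
        try {
            // Wait for every worker before reading the result.
            for (Thread worker : workers) {
                worker.join();
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(countWithThreads(4, 100_000));
    }
}
```

The lock does cost something per call, but it buys a result you can rely on.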

Yes, storing XXX.length() in a local variable will (a) eliminate the vtable lookup and (b) eliminate the method call on each iteration. You will save about two opcodes' worth of time - typically in the order of a couple of microseconds on a very slow machine. The same goes for the other concerns: whichever way you look at it, we are talking about micro optimisations, i.e. changes that will improve the system's performance by a couple of hundred microseconds at most.
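For reference, the two loop styles under discussion look roughly like this. This is an illustrative sketch only: the class `LengthHoisting` and the space-counting task are my own invention, not the reviewed code. Both methods return the same result; the only difference is where length() gets called.

```java
// Hypothetical demo class; the names and the task are illustrative only.
final class LengthHoisting {

    // Calls text.length() in the loop condition, i.e. once per iteration.
    static int countSpacesInline(String text) {
        int count = 0;
        for (int i = 0; i < text.length(); i++) {
            if (text.charAt(i) == ' ') {
                count++;
            }
        }
        return count;
    }

    // Stores the length in a local variable first, as the review suggested.
    static int countSpacesHoisted(String text) {
        int count = 0;
        final int length = text.length();
        for (int i = 0; i < length; i++) {
            if (text.charAt(i) == ' ') {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        String sample = "a method parameter of type String";
        System.out.println(countSpacesInline(sample) + " " + countSpacesHoisted(sample));
    }
}
```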

The irony here - and the thing that made me chuckle - is that the network latency of a request via CORBA (the same code execution path these optimisations were suggested for) is in the order of several milliseconds even on a very fast WAN. Then we have the issue of throughput: every request sends at least a couple of kilobytes of data. This takes a finite amount of time - on a 1 Mbps link typically in the order of 10 or so milliseconds, give or take a few. Then there is the time to build and parse the CORBA objects that need to be serialised and deserialised. That takes a significant amount of time - in the order of a couple of hundred milliseconds.
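A back-of-the-envelope calculation puts these figures side by side. Every number below is an assumed round value picked from the ranges above (5 ms latency, a 2 kB payload on a 1 Mbps link, 200 ms of marshalling, a 200 microsecond saving), and the class `LatencyBudget` is a hypothetical name, so treat it as a sketch rather than a measurement.

```java
// Hypothetical back-of-the-envelope calculator; every figure is an assumed round number.
final class LatencyBudget {

    // Time to push payloadBytes over a link of linkBitsPerSecond, in microseconds.
    static long transferMicros(long payloadBytes, long linkBitsPerSecond) {
        return payloadBytes * 8L * 1_000_000L / linkBitsPerSecond;
    }

    // Percentage of the total request time that a given saving represents.
    static double savingPercent(long savingMicros, long totalMicros) {
        return 100.0 * savingMicros / totalMicros;
    }

    public static void main(String[] args) {
        long latencyMicros = 5_000;    // assumed: "several milliseconds" of WAN latency
        long transfer = transferMicros(2_000, 1_000_000); // assumed: 2 kB over 1 Mbps
        long marshalMicros = 200_000;  // assumed: "a couple of hundred milliseconds"
        long savingMicros = 200;       // assumed: best case for the micro optimisations

        long total = latencyMicros + transfer + marshalMicros;
        System.out.printf("transfer: %d us, total: %d us, saving: %.2f%% of the request%n",
                transfer, total, savingPercent(savingMicros, total));
    }
}
```

With these assumptions the transfer alone takes 16 ms, and the best-case micro optimisation saves less than a tenth of a percent of the request time.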

What is my point? Why waste time, money and effort on shaving 100 microseconds off the critical path in your system if you have overhead that runs into hundreds of milliseconds, if not seconds? Much rather spend the time and money on upgrading the remote link, on optimising the data structures so as to send less data, etc.

Pareto had it right when he suggested (applied to this context) that by spending your time on 20% of the critical code you can attain 80% of the benefits in performance.

The trick is to find the critical 20% of the code. It is clearly NOT the micro optimisations. Especially if you have to do this at the cost of reliability.