Deductive reasoning defined

Deductive reasoning is the process of deriving a conclusion (C) from a known (or assumed) set of premises (P). An example illustrates this:

P: Assume all men will die someday

P: Assume bin Laden is a man

C: bin Laden will die someday
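The same syllogism can be written as a formal proof. The sketch below uses Lean 4 (core only, no Mathlib); the names `Person`, `Man`, `Dies` and `binLaden` are chosen here for illustration and are not part of the original text. The proof term simply applies the general premise to the specific individual.

```lean
-- Premise 1 (h1): every man dies.  Premise 2 (h2): bin Laden is a man.
-- Conclusion: bin Laden dies.
example {Person : Type} (Man Dies : Person → Prop) (binLaden : Person)
    (h1 : ∀ x, Man x → Dies x) (h2 : Man binLaden) : Dies binLaden :=
  h1 binLaden h2  -- instantiate h1 at binLaden, then apply it to h2
```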

This deductive step was based on the logical principle that if A implies B, and A is true, then B is true.
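This rule is usually called modus ponens. As a minimal sketch (not from the original text), a brute-force truth table in Python can confirm that it holds for every assignment of truth values to A and B; the helper names `implies` and `modus_ponens_is_valid` are hypothetical, chosen for this illustration.

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material implication: 'p implies q' is false only when p is true and q is false."""
    return (not p) or q


def modus_ponens_is_valid() -> bool:
    """Check that ((A implies B) and A) implies B under every truth assignment."""
    return all(
        implies(implies(a, b) and a, b)
        for a, b in product([False, True], repeat=2)
    )


# Print each row: the premises hold only in the row where A and B are both true.
for a, b in product([False, True], repeat=2):
    premises = implies(a, b) and a
    print(f"A={a!s:5} B={b!s:5} premises={premises!s:5} conclusion B={b}")

print(modus_ponens_is_valid())  # True
```

Because there are only four rows, checking every case is a complete proof that the rule is valid in propositional logic.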

Deductive reasoning can still go wrong: a single invalid step is enough to reach a false conclusion. This is seen in the following famous fallacious proof:

Let

$$a = b$$

Thus,

$$a^2 = ab$$

$$a^2 + a^2 = a^2 + ab$$

$$2a^2 = a^2 + ab$$

$$2a^2 - 2ab = a^2 + ab - 2ab$$

$$2a^2 - 2ab = a^2 - ab$$

Rewrite this as:

$$2(a^2 - ab) = 1(a^2 - ab)$$

Dividing both sides by $(a^2 - ab)$ gives:

$$2 = 1$$

QED.

Can you see the problem with this line of reasoning? It is a good example of why deductive reasoning cannot be applied blindly in mathematical proofs.

Hint: Substitute the original assumption back into the denominator...
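A quick numeric check makes the hint concrete. In the sketch below, the helper `divisor` is hypothetical and the value a = b = 3 is arbitrary; it evaluates $a^2 - ab$, which factors as $a(a - b)$ and is therefore zero whenever $a = b$. The line $2(a^2 - ab) = 1(a^2 - ab)$ is true only because both sides equal 0, and Python refuses the division that "cancels" the common factor.

```python
def divisor(a: int, b: int) -> int:
    """Return a^2 - ab, the quantity both sides are divided by in the 'proof'."""
    return a * a - a * b


a = b = 3  # the premise a = b; any shared value gives the same result
d = divisor(a, b)

print(d)               # 0, since a^2 - ab = a(a - b) and a - b = 0
print(2 * d == 1 * d)  # True: 2 * 0 == 1 * 0, so the line before the division holds

try:
    print(2 * d / d, 1 * d / d)  # the step that "cancels" a^2 - ab
except ZeroDivisionError:
    print("cannot divide by a^2 - ab: it is zero when a = b")
```

Every line of the derivation up to the division is valid; the single invalid step is dividing by zero.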

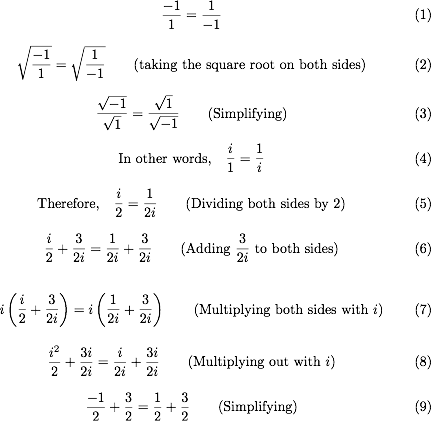

Another, much more subtle example is given below. See if you can find the error in the reasoning:

This reduces to 1 = 2 (by adding terms).

QED.

Hint: Do not make any assumptions about algebra other than those you have explicitly seen proofs for.