Building a RAID5 array

I had a huge problem. As an avid photographer, I have been building up a huge library of photos. I do not like archiving photos and deleting them from my main library, since I like to have them all available for display.

The problem is that my library is pushing 200GB and grows by about 100GB each year. I already have two 400GB HDDs in my G5 (RAID1), but with the OS and other applications I was running out of disk space. Since bigger hard drives are not readily available, I had to look for some form of external storage. In this post I'll explain what my options were and why I decided to build my own RAID array.

The easy way out would have been to purchase a LaCie RAID system, but that would have set me back about R32,000, and it can only provide 1.5TB of disk space (if using 500GB drives in RAID5). Furthermore, its speed is limited by the FireWire 800 interface: although that is fast, a large RAID5 array on SATA can go well over 100MB/s. Another option would have been the Apple Xserve RAID, but that costs R60,000 for 750GB in RAID5. Yes, it is massively scalable up to insane capacities, but it is just too expensive (and noisy).

So I decided to look into building my own RAID array. Why? Because I already had a nice desktop Pentium 4 3.2GHz machine with 2GiB RAM. Since its case can only handle 4 hard drives and the ventilation sucked, I decided to replace the case too. Fortunately my power supply is rated at 500W - keeping many hard drives spinning requires lots of power.
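As a rough check on the capacity and price figures above, here is a minimal Python sketch of the arithmetic. It assumes the usual RAID5 rule that one drive's worth of space goes to parity, that the LaCie figure means four 500GB drives, and that the Xserve RAID quote is for 750GB usable. The function names are my own, purely for illustration.

```python
def raid5_usable_gb(drive_count, drive_size_gb):
    """Usable RAID5 capacity: one drive's worth of space holds parity."""
    if drive_count < 3:
        raise ValueError("RAID5 needs at least 3 drives")
    return (drive_count - 1) * drive_size_gb


def rand_per_usable_gb(price_rand, usable_gb):
    """Price in Rand divided by usable capacity in GB."""
    return price_rand / usable_gb


# Assumption: the LaCie's 1.5TB comes from four 500GB drives in RAID5.
lacie_gb = raid5_usable_gb(4, 500)
lacie_cost = rand_per_usable_gb(32000, lacie_gb)

# Assumption: the Xserve RAID quote is for 750GB usable.
xserve_cost = rand_per_usable_gb(60000, 750)

print(f"LaCie:  {lacie_gb} GB usable, R{lacie_cost:.2f}/GB")
print(f"Xserve: 750 GB usable, R{xserve_cost:.2f}/GB")
```

Under those assumptions the LaCie works out to a bit over R21 per usable GB and the Xserve RAID to R80 per usable GB, which is the yardstick a home-built array has to beat.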

Case

My requirements for a proper case were as follows:

- It had to be sturdy - it will carry lots of weight

- Ventilation should be exceptional - but quiet

- It should support at least 8 hard drives, preferably 10

- It should not be too expensive

- It should be in a normal Desktop form factor - not a server chassis

I could only find one case matching those requirements - the CM Stacker.

IDE or SCSI?

Traditionally IDE is seen as inferior to SCSI, though larger in capacity and cheaper. With the advent of SATA, things are slowly changing. In my mind the only real benefit SCSI still has is the superior mechanics of the hard disk drives themselves. The ball bearings last longer and the MTBF of the drives is just much better. That is changing too, with the introduction of the Western Digital Caviar RE2 with an MTBF figure of 1.2 million hours. And that is exactly why I bought four WD4000YR drives.

I have an intense dislike of onboard SATA controllers when it comes to RAID. I have now lost all my data three times with RAID1 on various SATA chipsets built into modern motherboards. That is why I went shopping for a robust, hardware-based SATA RAID controller. My requirements were:

- Support for SATA hardware RAID5

- Native support in the Linux kernel

- Support for at least 8 devices

- PCI-X since I do not have PCIe

Two cards got my attention - the Adaptec 2810SA and the 3ware 9000 series. Both seemed to be excellent cards, and in the end it was availability that made me purchase the Adaptec.
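To put the 1.2 million hour MTBF figure quoted earlier in perspective, it can be converted to a rough yearly failure probability. The sketch below is a back-of-the-envelope estimate only. It assumes a constant failure rate (an exponential lifetime model), drives that run 24 hours a day, and failures that are independent of each other, none of which the manufacturer's figure guarantees. The function names are mine.

```python
import math

HOURS_PER_YEAR = 24 * 365  # assuming the drives never spin down


def annual_failure_probability(mtbf_hours):
    """Chance that one drive fails within a year, assuming a constant failure rate."""
    return 1.0 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


def any_drive_fails(drive_count, per_drive_probability):
    """Chance that at least one of several independent drives fails in a year."""
    return 1.0 - (1.0 - per_drive_probability) ** drive_count


per_drive = annual_failure_probability(1_200_000)
four_drives = any_drive_fails(4, per_drive)

print(f"one drive:   {per_drive:.2%} per year")
print(f"four drives: {four_drives:.2%} per year")
```

On these assumptions a single drive has well under a 1% chance of failing in a year, and a set of four has just under 3%. That is small, but not small enough to skip the redundancy.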

Putting it all together

So to put it all together, I used a Gentoo Linux installation (nothing else is really an option - Gentoo Linux is simply the best distro there is, and whether to use Linux or not is not even a question). I recompiled my kernel with the aacraid SCSI driver and the other usual SCSI support enabled. I then grabbed the command line utility from Adaptec's CD, which allows me to interact with the controller. It is extremely useful for querying the controller's status, or for silencing the alarm when a drive fails. It is called aacapps and is available as an RPM package. Just be careful: when running Gentoo on a 2.6 kernel you will get the following error when running /usr/sbin/aaccli:

CLI > open AAC0

Executing: open "AAC0"

Command Error: <The requested controller does not exist.>

That is because the /dev/aac0 entry is missing. Just copy it from /usr/sbin/aac0. You should then see:

CLI > open AAC0

Executing: open "AAC0"

and then

AAC0> container list
Executing: container list
Num          Total  Oth Stripe         Scsi   Partition
Label Type   Size   Ctr Size   Usage   C:ID:L Offset:Size
----- ------ ------ --- ------ ------- ------ -------------
 0    RAID-5 1.08TB     256KB  Valid   0:00:0 64.0KB: 372GB
 /dev/sda    Nybble                    0:01:0 64.0KB: 372GB
                                       0:02:0 64.0KB: 372GB
                                       0:03:0 64.0KB: 372GB
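The sizes in a listing like this can be sanity-checked by hand. The sketch below assumes that the drives are sold in decimal gigabytes (a 400GB drive being exactly 400 x 10^9 bytes), that the controller reports binary units, and that RAID5 keeps one drive's worth of space for parity. The helper names are mine.

```python
GIB = 2 ** 30  # bytes in a binary gigabyte
TIB = 2 ** 40  # bytes in a binary terabyte


def marketed_gb_to_bytes(size_gb):
    """Drive vendors count 1 GB as 10**9 bytes."""
    return size_gb * 10 ** 9


def raid5_usable_bytes(drive_count, drive_bytes):
    """RAID5 keeps one drive's worth of space for parity."""
    return (drive_count - 1) * drive_bytes


# Assumption: each drive holds exactly 400 x 10**9 bytes.
drive_bytes = marketed_gb_to_bytes(400)
array_bytes = raid5_usable_bytes(4, drive_bytes)

print(f"per drive: {drive_bytes / GIB:.1f} GiB")
print(f"array:     {array_bytes / TIB:.2f} TiB")
```

That comes to about 372.5 GiB per drive and about 1.09 TiB for the array, close to the 372GB and 1.08TB the controller reports. The small difference presumably comes from rounding and reserved space.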

Obviously your details will vary, but at least you can now interact with the controller. The finished build looks as follows:

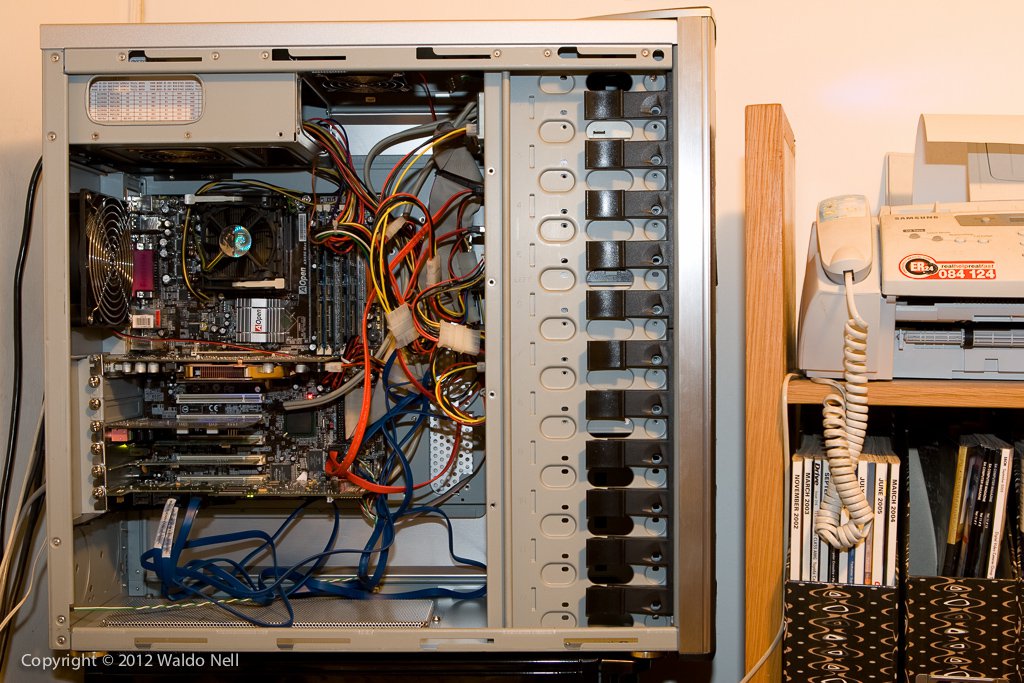

And with the cover removed: